We could define unique constraints for the labels Country and Region, but since our graph is so tiny, we'll skip this today and proceed with the import.

Import query

    LOAD CSV WITH HEADERS FROM "file:///countries%20of%20the%20world.csv" as row

When I first explored this dataset in Neo4j, I got back weird results and didn't understand why. After a couple of minutes, I found the culprit. If we run the Cypher query `RETURN toFloat("0,1")`, it returns a null value. I later found out that this is a Java limitation and not specific to Neo4j. The numbers in this dataset use the comma as a decimal point. This doesn't work for us, so we need to replace the commas with dots to be able to store the values as floats.

Let's replace the commas with dots and store the new values as floats.

    WITH c, key, toFloat(replace(c,',','.')) as fixed_float
    CALL (c, key, fixed_float) YIELD node

We don't use all the available features of the countries in our analysis. I cherry-picked a couple of features that have little to no missing values.

Let's check basic statistics about our features with a procedure.

    UNWIND ["Birthrate", "Infant mortality (per 1000 births)","GDP ($ per capita)",
    WITH potential_feature, (c,) as stats
    (stats.`0.99`,2) as p99

Results

I found it interesting that Monaco has 1035 phones per 1000 persons, so more phones than people. On the other side of the spectrum, I found it shocking that Angola has (not sure exactly which year) 191.19 infant deaths per 1000 births.

MinMax normalization is used to scale all values of a feature to the range between 0 and 1. It is better known as feature scaling or data normalization.

    (toFloat(c1) - min) / (max - min) as normalized_value
    CALL (c1, newKey, normalized_value)
    RETURN distinct 'normalization done'

Missing values

As we observed at the start, some features of the countries have missing values. A simple average of the region is used to fill in the missing values.
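The preprocessing steps this post describes (comma decimals converted to floats, MinMax normalization, and filling gaps with the region average) can be sketched outside of Neo4j as well. The following is a minimal Python sketch of the same arithmetic, not the Cypher used in the post. The function names, the `region` and `birthrate` keys, and the sample rows are all assumptions made up for illustration.

```python
from statistics import mean


def parse_decimal_comma(text):
    """Convert a string such as "0,1" to a float; return None for empty input."""
    if text is None or text.strip() == "":
        return None
    return float(text.replace(",", "."))


def min_max_normalize(values):
    """Scale the non-missing values to the range [0, 1]; None stays None."""
    present = [v for v in values if v is not None]
    low, high = min(present), max(present)
    if high == low:  # avoid dividing by zero when all values are equal
        return [None if v is None else 0.0 for v in values]
    return [None if v is None else (v - low) / (high - low) for v in values]


def fill_with_region_mean(rows, feature):
    """Return copies of rows where a missing feature is set to its region's mean."""
    by_region = {}
    for row in rows:
        if row[feature] is not None:
            by_region.setdefault(row["region"], []).append(row[feature])
    filled = []
    for row in rows:
        value = row[feature]
        if value is None and row["region"] in by_region:
            value = mean(by_region[row["region"]])
        filled.append({**row, feature: value})
    return filled


# Hypothetical sample rows; the values are made up, not taken from the dataset.
raw_rows = [
    {"country": "A", "region": "R1", "birthrate": "10,5"},
    {"country": "B", "region": "R1", "birthrate": "20,5"},
    {"country": "C", "region": "R1", "birthrate": ""},
    {"country": "D", "region": "R2", "birthrate": "40,5"},
]

parsed = [parse_decimal_comma(r["birthrate"]) for r in raw_rows]
scaled = min_max_normalize(parsed)
rows = [{**r, "birthrate": s} for r, s in zip(raw_rows, scaled)]
result = fill_with_region_mean(rows, "birthrate")
```

The sketch normalizes first and fills the gaps afterwards, following the order in which the post presents the two steps. A region without any known value keeps its missing entries, which is a design choice of this sketch.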
Let's start by checking how many missing values there are in the CSV file. The Countries of the world.csv file must be copied to the Neo4j/import folder to be able to run the missing values query.

Missing values query

    LOAD CSV WITH HEADERS FROM "file:///countries%20of%20the%20world.csv" as row
    Sum(CASE WHEN row is null THEN 1 ELSE 0 END) as missing_value
    ORDER BY missing_value DESC LIMIT 15

Missing values results

As expected with any real-world dataset, there are some missing values. Fortunately, only five features have a noticeable number of missing values, while the others have close to zero. Features with more than five percent of missing values (11+) are not considered in our analysis.

We have one type of node, representing countries. They have some features stored as properties and are also connected to the region they belong to with a relationship.
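The missing-value check described above boils down to counting empty cells per column and dropping columns above a threshold. Here is a hedged Python sketch of that idea. It is not the post's Cypher query. The function names, the inline sample CSV, and the rule that an empty cell counts as missing are assumptions for illustration.

```python
import csv
import io
from collections import Counter


def count_missing(csv_text):
    """Count empty cells per column; returns (counts, row_count, column_names)."""
    reader = csv.DictReader(io.StringIO(csv_text))
    missing = Counter()
    total = 0
    for row in reader:
        total += 1
        for key, value in row.items():
            if key is None:  # extra cells beyond the header are ignored
                continue
            if value is None or value.strip() == "":
                missing[key] += 1
    return missing, total, list(reader.fieldnames or [])


def features_to_keep(missing, total, columns, max_share=0.05):
    """Keep the columns whose share of missing values is at most max_share."""
    return [c for c in columns if missing[c] / total <= max_share]


# Hypothetical sample data, not rows from the real dataset.
sample = """Country,Region,Birthrate,Climate
A,R1,"10,5",1
B,R1,,2
C,R2,"30,1",
D,R2,"12,0",
"""

missing, total, columns = count_missing(sample)
# Columns sorted by missing count, highest first, like ORDER BY ... DESC.
ranking = missing.most_common()
kept = features_to_keep(missing, total, columns)
```

With only four sample rows, a single empty cell already exceeds the five percent threshold, so only the fully populated columns remain in `kept`.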

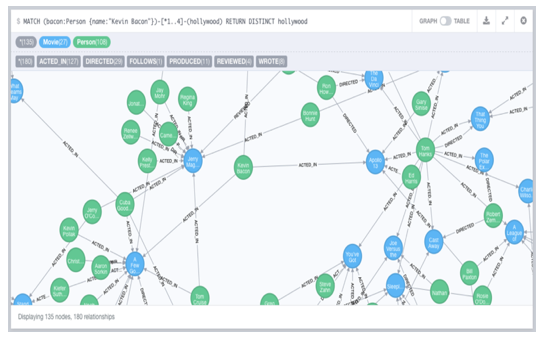

The idea for this blog post is to show how to chain similarity algorithms with community detection algorithms in Neo4j without ever storing any information back to the graph. If, for example, you have only read rights to Neo4j, or you don't want to store anything to the graph while analyzing it, then chaining algorithms is for you.

As I used a new dataset to play around with, the post also shows how to import and preprocess the graph for the analysis. We use the Countries of the World dataset, made available by Fernando Lasso on Kaggle. It contains information such as birthrate, GDP, infant mortality, and others about 227 countries of the world.

As you can see in the output, sorting is not done correctly. “Ashton” comes before “Amber” in the output. That is because the query sorts the results of the first query, then sorts the results of the second query, and only then combines them.

How can we resolve this issue? By using COLLECT and UNWIND as a power combo. COLLECT collects values into a list (a real list that you can run list operations on). UNWIND transforms the list back into individual rows.

First, we turn the columns of a result into a map (struct, hash, dictionary) to retain its structure. For each partial query, we use COLLECT to aggregate these maps into a list, which also reduces our row count (cardinality) to one (1) for the following MATCH. Combining the lists is a simple list concatenation with the “+” operator. Once we have the complete list, we use UNWIND to transform it back into rows of maps. After this, we use the WITH clause to deconstruct the maps into columns again and perform operations like sorting, pagination, filtering, or any other aggregation.

    RETURN row.name as name, sum(row.hoursPerWeek) as hoursPerWeek
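The COLLECT and UNWIND pattern described above has a close analogue in ordinary list handling: gather each partial result into a list of maps, concatenate the lists, then iterate, aggregate, and sort once over the combined rows. The Python sketch below illustrates only that idea. The function name, the extra name "Zoe", and all hour values are made up for illustration.

```python
from collections import defaultdict

# Hypothetical partial results, one list of maps per sub-query (the COLLECT step).
first_query = [
    {"name": "Ashton", "hoursPerWeek": 10},
    {"name": "Zoe", "hoursPerWeek": 5},
]
second_query = [
    {"name": "Amber", "hoursPerWeek": 8},
    {"name": "Ashton", "hoursPerWeek": 4},
]


def combine_and_sort(*partials):
    """Concatenate partial results, sum hours per name, and sort by name."""
    combined = []
    for partial in partials:
        combined = combined + list(partial)  # list concatenation with "+"

    totals = defaultdict(int)
    for row in combined:  # iterate over the rows again (the UNWIND step)
        totals[row["name"]] += row["hoursPerWeek"]

    # Sorting happens once, over the combined rows, not per sub-query.
    return [{"name": name, "hoursPerWeek": totals[name]} for name in sorted(totals)]


rows = combine_and_sort(first_query, second_query)
```

Because the sort runs after the lists are merged, "Amber" ends up before "Ashton", which is the ordering the per-query sort failed to produce.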
